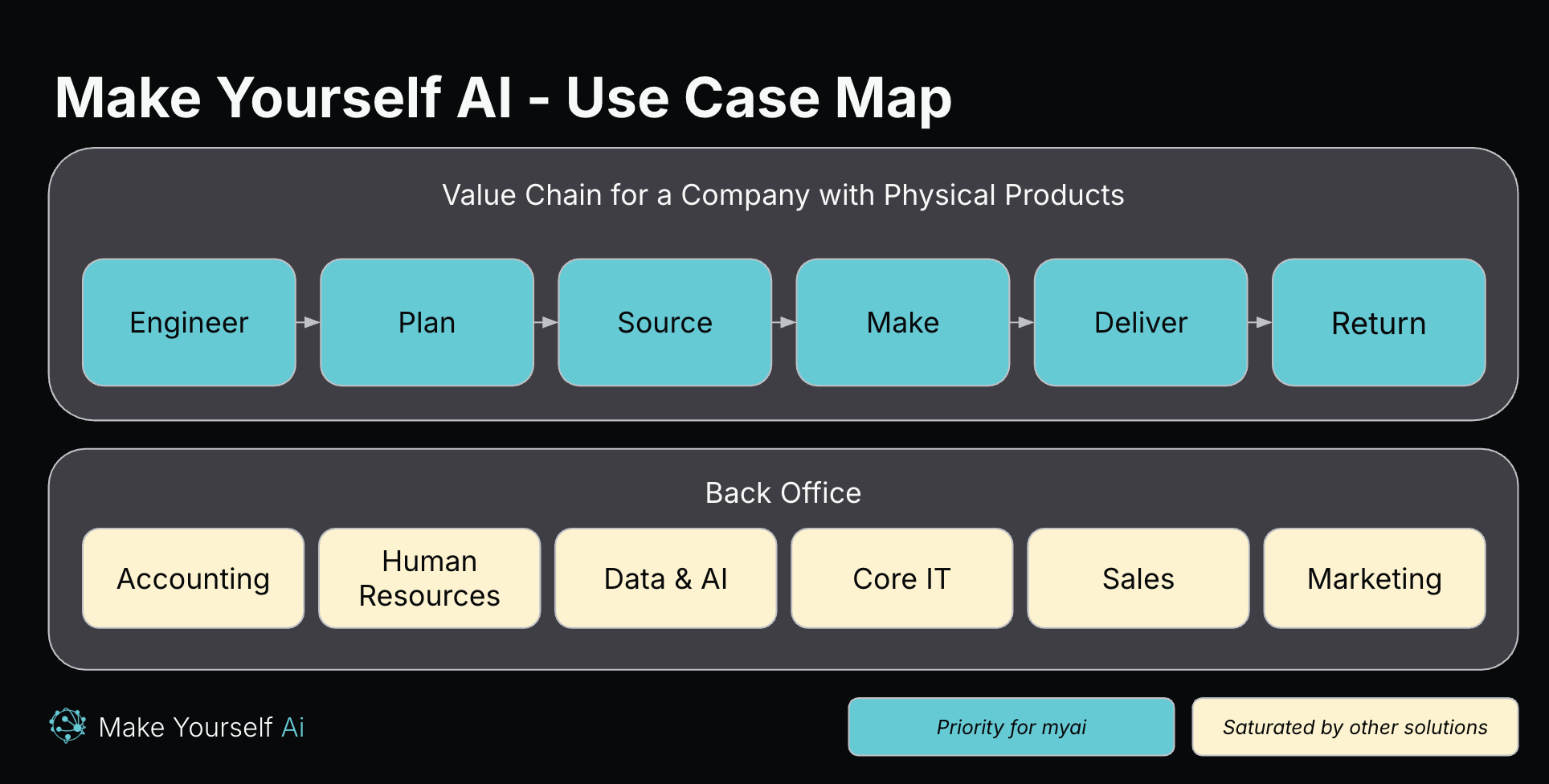

Look at any agentic AI website and you'll see the same four use cases: outbound marketing, customer support chatbots, meeting summaries, code generation.

These are the easy problems. Standard patterns, clear inputs and outputs, low stakes when the AI gets it wrong. They're valuable. They're also commoditized by design, because every vendor is selling roughly the same workflow.

The easy-problem trap

The AI industry has optimized for problems that are easy to demo. An AI that writes a cold email in three seconds makes a great product video. An AI that summarizes a meeting and extracts action items doesn't need much context for the value to land.

Those are also the problems where AI creates the least durable advantage. If every company has the same AI writing the same cold emails, the advantage lives somewhere else.

The hard problems look different.

The hard problems are coordination problems

Look at any company that builds physical products for customers who depend on them. The work cuts across at least three functions: manufacturing, engineering, and supply chain. Each function has its own system (MES, PLM, ERP), its own vocabulary (defect rate vs. nonconformance vs. supplier escape), and its own pressure (ship the line vs. release the design vs. protect working capital).

When the company is running well, these three functions agree on what's happening and what to do next. When it isn't, they don't. The veteran who can reconcile them, usually a quality engineer or a senior planner who has worked all three sides, is the most valuable person in the building.

That veteran is the bridge. The bridge is technology (knowing which system to query), process (knowing the order of operations when a problem lands), and people (knowing who decides what, and when to escalate). All three at once.

Two patterns show up over and over.

Reactive example: quality escapes

A defect ships. The customer calls. Now you walk it back, in this order: which work order produced that part, which operator ran it, which machine, which drawing revision was in effect, which supplier lot the raw material came from, whether that supplier had a recent process change, and whether the same failure mode has shown up before on a different work order or customer.

That's manufacturing data (MES), engineering data (PLM), and supply chain data (ERP, supplier scorecard) in one investigation. Plus tribal knowledge nobody wrote down: this supplier always runs hot the week after a holiday shutdown, this customer measures the outer diameter differently than the rest of our book, we changed the heat treat last March and the spec never got updated.

The veteran walks it back in twenty minutes. Anyone else takes two days, and probably misses the supplier-side root cause because they don't think to look there.
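
The walk-back order above can be sketched as a single trace over linked records. This is a minimal illustration, not a real MES, PLM, or ERP integration: the record types, field names, and sample data are all hypothetical, and in-memory dictionaries stand in for the three systems.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class WorkOrder:  # hypothetical MES record
    order_id: str
    operator: str
    machine: str
    drawing_rev: str    # revision in effect, as PLM would report it
    supplier_lot: str   # raw-material lot, as ERP would report it
    customer: str
    failure_mode: Optional[str] = None  # set when a defect was logged


@dataclass(frozen=True)
class SupplierLot:  # hypothetical ERP / supplier-scorecard record
    lot_id: str
    supplier: str
    recent_process_change: bool


def walk_back(part_serial, failure_mode, part_to_order, orders, lots):
    """Follow the trace in the order the text lists and return the findings."""
    order = orders[part_to_order[part_serial]]
    lot = lots[order.supplier_lot]
    # Same failure mode on a different work order (possibly another customer).
    repeats = sorted(
        o.order_id
        for o in orders.values()
        if o.failure_mode == failure_mode and o.order_id != order.order_id
    )
    return {
        "work_order": order.order_id,
        "operator": order.operator,
        "machine": order.machine,
        "drawing_rev": order.drawing_rev,
        "supplier_lot": lot.lot_id,
        "supplier": lot.supplier,
        "supplier_process_change": lot.recent_process_change,
        "seen_before_on": repeats,
    }


# Made-up sample data, for illustration only.
ORDERS = {
    "WO-100": WorkOrder("WO-100", "op-a", "lathe-2", "C", "LOT-7", "cust-x", "OD oversize"),
    "WO-101": WorkOrder("WO-101", "op-b", "lathe-3", "C", "LOT-7", "cust-y", "OD oversize"),
    "WO-102": WorkOrder("WO-102", "op-a", "lathe-2", "B", "LOT-5", "cust-x"),
}
LOTS = {
    "LOT-5": SupplierLot("LOT-5", "supplier-1", False),
    "LOT-7": SupplierLot("LOT-7", "supplier-2", True),
}
PART_TO_ORDER = {"SN-0001": "WO-100"}

report = walk_back("SN-0001", "OD oversize", PART_TO_ORDER, ORDERS, LOTS)
```

In this toy data the trace surfaces a supplier lot with a recent process change and a second work order with the same failure mode, which is the supplier-side lead a less experienced investigator might skip. The tribal knowledge described above has no field here, which is the point: the records alone do not carry it.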

Proactive example: engineering change requests

The customer tightens a spec. Engineering updates the drawing. That sounds like an engineering decision until you ask the next four questions: how much existing inventory still meets the old spec but not the new one; which in-flight work orders need to be re-routed or scrapped; which suppliers need to be requalified at the new tolerance, and how long that takes; and how the design change interacts with the maintenance contract the customer signed last quarter.

Each function has a clean answer to its own question. None of them has the full picture. The veteran does the routing: owns the question, sequences the answers, brokers the hand-offs. Without that routing, the change request sits in someone's queue for two weeks, then ships with a forty-cent margin hit nobody noticed.
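
The first of those four questions, inventory that meets the old spec but not the new one, is the easiest to make concrete. The sketch below assumes a symmetric tolerance around a nominal dimension and one recorded measurement per part; the dimensions, tolerances, and serial numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tolerance:
    nominal: float      # target dimension (units are an assumption, e.g. mm)
    plus_minus: float   # symmetric allowed deviation

    def accepts(self, measured: float) -> bool:
        return abs(measured - self.nominal) <= self.plus_minus


def stranded_inventory(measurements, old_spec, new_spec):
    """Parts that pass the old spec but fail the tightened one."""
    return [
        serial
        for serial, measured in measurements.items()
        if old_spec.accepts(measured) and not new_spec.accepts(measured)
    ]


# Hypothetical specs and measurements.
OLD_SPEC = Tolerance(nominal=25.000, plus_minus=0.050)
NEW_SPEC = Tolerance(nominal=25.000, plus_minus=0.020)
ON_HAND = {
    "SN-1": 25.010,  # passes both specs
    "SN-2": 25.030,  # passes old only
    "SN-3": 24.960,  # passes old only
    "SN-4": 25.015,  # passes both specs
    "SN-5": 25.060,  # fails both specs
}

stranded = stranded_inventory(ON_HAND, OLD_SPEC, NEW_SPEC)
```

This answers only one function's question. The in-flight work orders, supplier requalification, and contract questions each need their own source, and someone still has to sequence the answers.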

Why generic AI can't solve these

The standard enterprise AI pitch is: connect to your data, train a model, deploy an agent. That works for problems where the data tells the whole story.

In a coordination problem, the data is maybe 30% of the story. The rest lives in tribal knowledge, expert judgment, and the working relationships between the people who own each system. None of it is in a database.

A generic AI on your data will generate reports, flag anomalies, extract patterns. Useful outputs. Just not the work the veteran does. The veteran is deciding which system to query next, in what order, against which constraint, given who's on the hook. That decision is the work. That's what has to be mirrored.

What this looks like in practice

Start with the veteran. The systems come later. Sit through five real incidents. Write down what they checked, in what order, with what filter, and which judgment call they made when the data was ambiguous. That sequence is the agent's playbook.

Then connect the systems the agent will reach into. MES for the work order. PLM for the drawing. ERP for the supplier lot. CRM for the customer's measurement convention. The playbook says what to ask for. The systems know where it lives.
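
One way to picture the split between playbook and systems is an ordered list of steps that a small runner dispatches to per-system connectors. This is a sketch under stated assumptions, not a description of any particular product: `Step`, `run_playbook`, the question keys, and the stub connectors are all hypothetical names.

```python
from typing import Callable, Dict, List, NamedTuple, Optional, Tuple


class Step(NamedTuple):
    system: str    # which system to ask
    question: str  # what to ask it for


# The order captured from watching the veteran work real incidents.
PLAYBOOK: List[Step] = [
    Step("MES", "work_order"),
    Step("PLM", "drawing"),
    Step("ERP", "supplier_lot"),
    Step("CRM", "measurement_convention"),
]

Connector = Callable[[str, str], str]
Finding = Tuple[str, str, Optional[str]]


def run_playbook(
    playbook: List[Step],
    connectors: Dict[str, Connector],
    incident: str,
) -> List[Finding]:
    """Ask each system its question, in playbook order."""
    findings: List[Finding] = []
    for step in playbook:
        lookup = connectors.get(step.system)
        # A missing connector yields None so the gap stays visible to a human.
        answer = lookup(step.question, incident) if lookup else None
        findings.append((step.system, step.question, answer))
    return findings


def make_stub(system: str) -> Connector:
    """Stand-in for a real integration; returns a canned string."""
    return lambda question, incident: f"{system}:{question}:{incident}"


# CRM is deliberately left unconnected to show how a gap is reported.
CONNECTORS = {name: make_stub(name) for name in ("MES", "PLM", "ERP")}

findings = run_playbook(PLAYBOOK, CONNECTORS, "SN-0001")
```

Keeping the playbook as plain data means the sequence can be reviewed and corrected by the veteran without touching connector code. The judgment calls made when data is ambiguous are not modeled here.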

Now any engineer in the building can walk a quality escape back in twenty minutes. The AI doesn't replace the veteran. It puts the veteran's routing in the hands of everyone who needs it.

The hard problems are worth it

This kind of agent is harder to build. Harder to demo. Harder to put in a 30-second video.

It's also harder to commoditize. And the problems it solves are worth orders of magnitude more than another automated email.

That's what we're building at Make Yourself AI.